By Antoine Tardif, CEO & Founder of Unite.AI

The rapid evolution of generative AI has produced tools capable of creating images, music, and short video clips. But a new class of platforms is beginning to tackle something much more ambitious: turning a written story into a coherent film.

One of the newest entries into this space is PAI, a cinematic storytelling engine developed by Utopai Studios. Rather than focusing on short visual clips, the platform is designed around narrative workflows—allowing creators to move from script to characters, to storyboard, and finally to a finished multi-scene video sequence within a single interface.

After spending time working inside the platform, I found it clear that tools like this represent something fundamentally different from earlier AI video models. Instead of prompting individual scenes or clips, the system begins with the story itself.

My first step was uploading part of a screenplay.

The concept behind the platform is surprisingly intuitive. Instead of asking users to prompt every individual shot, PAI analyzes the story and extracts the core components of the production. Characters, environments, emotional tone, and narrative beats are all identified automatically.

From there, the platform begins constructing a cast of characters.

Each character receives a persistent visual identity tied to the story, allowing them to appear consistently across every scene in the sequence. Maintaining this type of continuity has been one of the biggest technical challenges in AI video generation. Characters often change appearance between clips, environments shift unpredictably, and the narrative flow breaks down.

PAI attempts to solve this by anchoring character design and scene context directly to the story structure itself.

Once the initial cast is generated, creators can refine each character through natural language instructions or visual adjustments until the cast matches their creative vision. The experience feels less like prompting an AI model and more like working with a digital casting and art department.

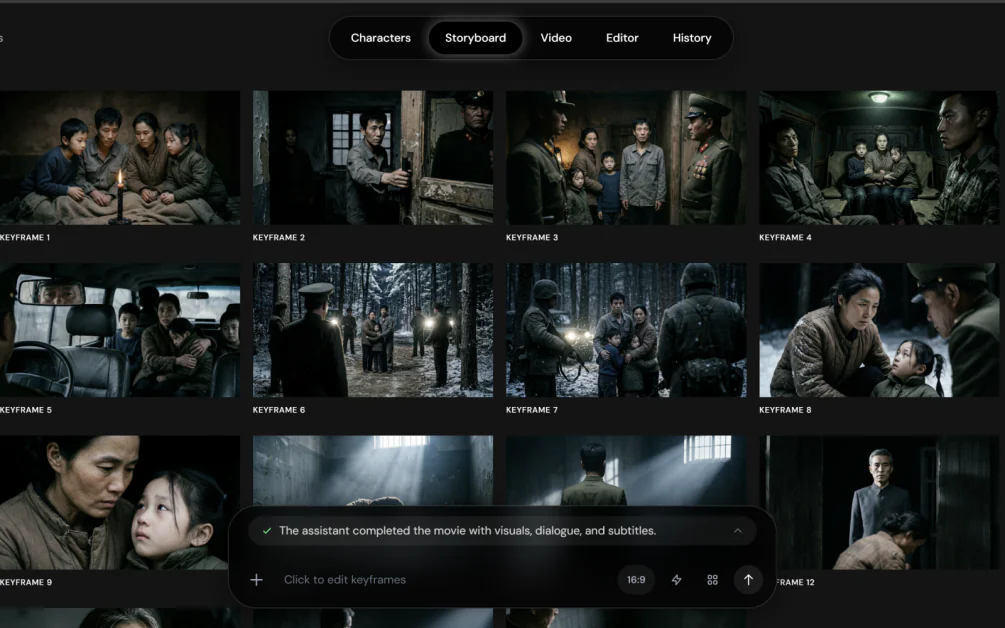

Once the characters were defined, the platform automatically moved into the next phase of the filmmaking process: storyboarding.

Instead of manually designing each shot, the system transformed the screenplay into a visual storyboard. A grid of scenes appeared on screen, each representing a key moment extracted from the narrative.

This stage felt remarkably similar to the early pre-production phase in traditional filmmaking. Directors typically use storyboards to plan camera angles, pacing, and visual composition before filming begins. In this case, the storyboard emerged automatically from the script itself.

Each shot could then be edited, reordered, or refined using natural language prompts. If a scene needed a different camera angle, lighting style, or emotional tone, it could be adjusted before the system moved into the final stage of video generation.

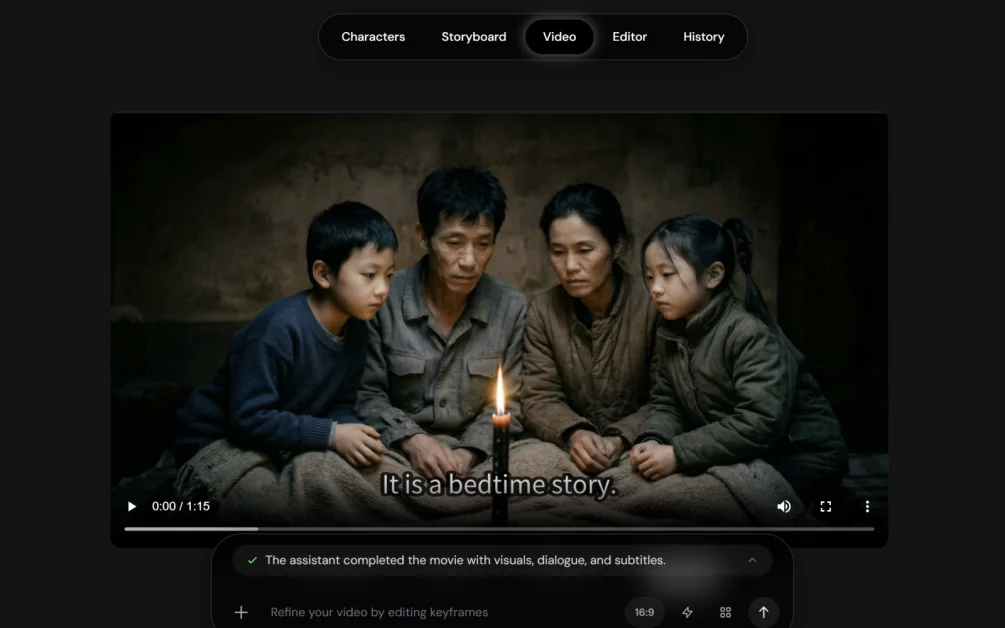

Once the storyboard was finalized, the platform generated the full video sequence.

With a single command, the storyboard was transformed into a cinematic video composed of multiple scenes. The system currently supports multi-scene sequences of up to sixteen shots and videos approaching one minute in length, significantly longer than what many existing AI video tools produce.

Where most AI video systems generate isolated clips, PAI attempts to maintain continuity across the entire sequence. Characters remain recognizable from one scene to the next, environments remain consistent, and the story unfolds as a cohesive narrative rather than a collection of disconnected visuals.

Watching the process unfold feels less like creating a video clip and more like observing a miniature film pipeline condensed into a single workflow.

While the overall experience is impressive, there are still areas that could improve.

One surprise during the process was the lack of support for industry-standard screenplay formats such as Final Draft (.fdx) or the open screenplay format Fountain, which many screenwriters use when writing scripts.

Instead, the screenplay had to be uploaded in a simpler format. Given how central screenwriting software is to the filmmaking process, support for these formats would likely make the platform more appealing to professional writers and studios.

That said, once the script was uploaded, the rest of the process—from character generation to storyboard creation to final video production—was smooth and surprisingly intuitive.

The company behind PAI has positioned the platform as more than just another generative video tool.

Utopai Studios was created to develop AI models specifically designed for narrative filmmaking, focusing on the types of workflows that professional creators rely on when developing long-form stories.

Several key design goals appear to shape the platform:

This approach reflects a broader shift in how AI video is evolving. Early tools focused primarily on generating short clips or experimental visuals. The next wave of platforms is beginning to tackle the much harder challenge of sustained storytelling.

What makes platforms like PAI compelling is how quickly a written idea can evolve into something visual.

A story that previously existed only as text can suddenly become a cast of characters, a sequence of scenes, and eventually a short film. What once required an entire team of artists, editors, and production crews can now begin with a single script and a few creative prompts.

That does not mean AI will replace filmmakers. Instead, tools like this may significantly expand the ways stories can be explored during early development.

Screenwriters could visualize scenes before pitching them. Directors might experiment with different visual styles during pre-production. Independent creators could produce cinematic content without needing access to traditional studio resources.

In many ways, these platforms resemble the early days of digital filmmaking or desktop publishing—technologies that dramatically lowered the barrier to creative production.

As AI video generation continues to improve, it is likely that more platforms will emerge that focus specifically on narrative storytelling rather than isolated clips.

New tools will appear for script-to-screen workflows, character generation, virtual cinematography, and automated editing pipelines. Together, they may form an entirely new creative ecosystem around AI-native filmmaking.

We are already beginning to see early signs of this shift, with platforms experimenting with distribution networks dedicated entirely to AI-generated films and series. Services such as Escape.ai, which is exploring a Netflix-style environment built specifically for AI-generated video content, offer a glimpse into how this new form of cinema might evolve.

While the technology is still in its early stages, the direction is becoming clear. The tools that once generated isolated clips are beginning to evolve into full creative pipelines.

And if platforms like PAI continue to improve, the journey from screenplay to cinema may soon become dramatically faster—and accessible to far more storytellers than ever before.